One Year of Vibe Coding: 878 Sessions Later

399 days, 878 sessions, 52.9k tool calls. What a year of talking to AI instead of writing code taught me about productivity, creativity, and the craft of programming itself.

A year ago I opened a terminal and typed a vague instruction to an AI. I wanted a portfolio website. I didn’t write a single line of code. Four sessions later, this website existed.

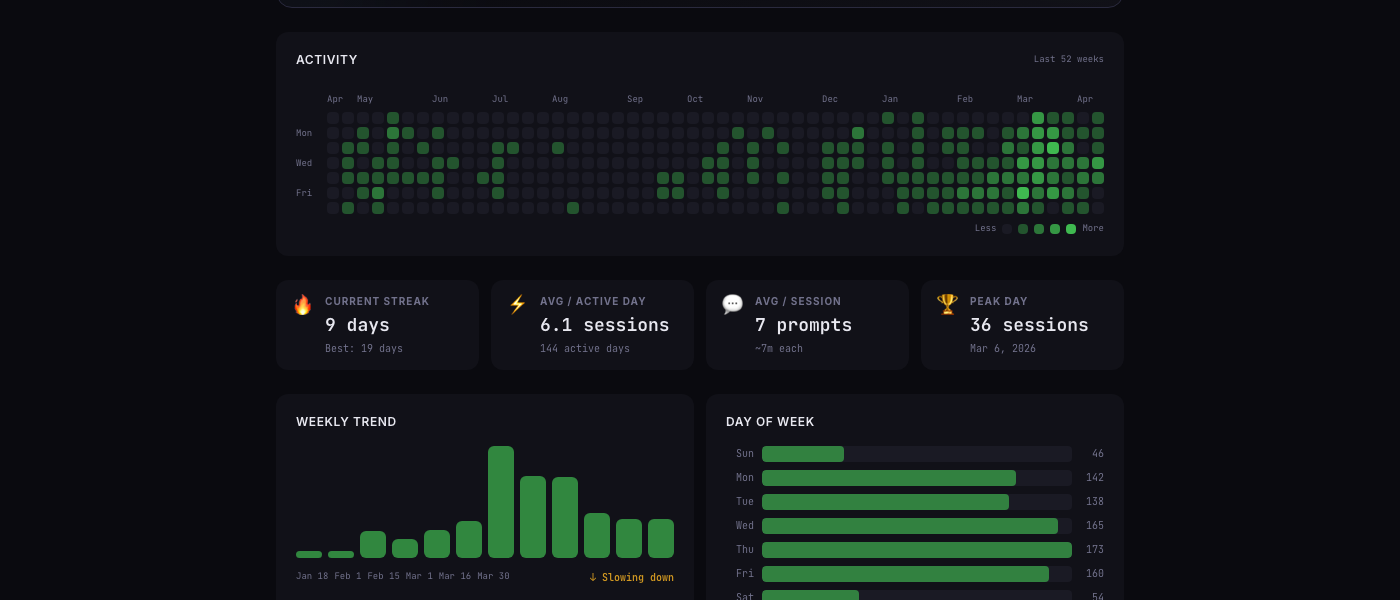

That was August 2025. Since then, I’ve had 878 AI coding sessions across 399 days. 6,046 prompts. 52,900 tool calls. 9,852 file edits. If you add up all the session durations, the total comes to 4 days and 1 hour.

I know these numbers because I built vibe-replay, a tool that turns AI coding sessions into interactive replays and analytics. The irony isn’t lost on me — I built a tool to understand my own vibe coding habits, and the tool itself was vibe coded.

But this isn’t about the tool. This is about what a year of this workflow actually looks like from the inside.

The before and after

I’ve been a software engineer for over a decade. I know how to write code. I can reason about systems. I have opinions about architecture.

And yet, somewhere around October 2025, I stopped writing code almost entirely.

Not on purpose. It happened gradually. A quick Claude Code session here. A Cursor autocomplete there. Then one day I realized I hadn’t manually typed a function signature in weeks. My fingers had shifted from writing code to writing prompts.

The strange part? My output increased. Not marginally — by a factor I’m still trying to quantify. Projects that would have taken a weekend shipped in an afternoon. Ideas that I would have filed away as “someday” actually got built.

This website. vibe-replay. A VB6 game resurrection. A 3D character renderer. Slack bots. Database migrations. CI pipelines. All of it, mostly through conversation.

What the data says

When you have 878 sessions of data, patterns emerge that you’d never notice day to day.

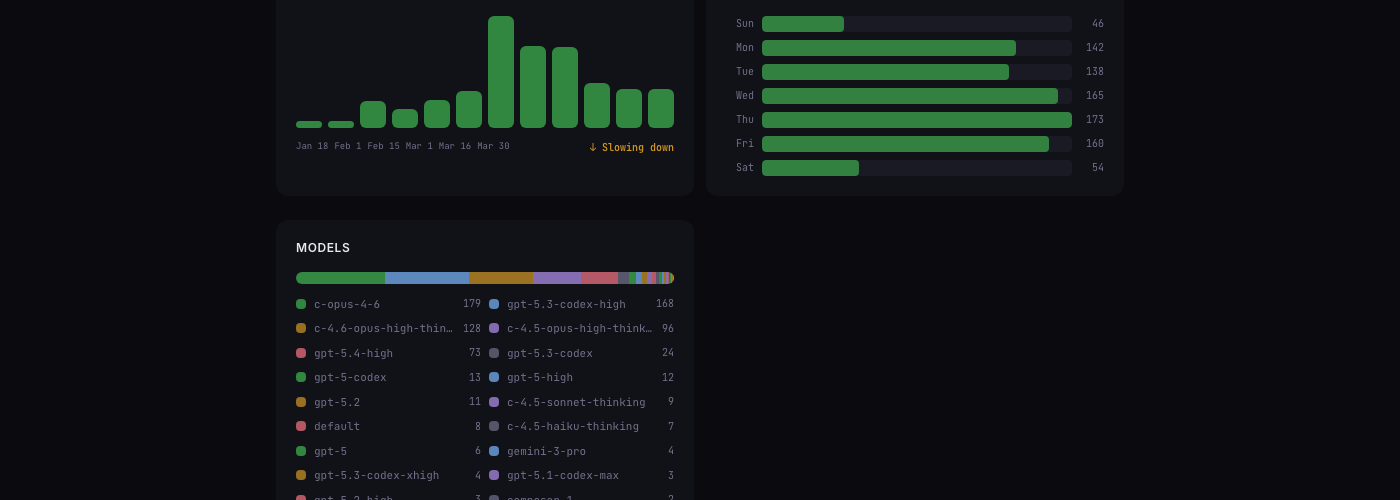

I’m a weekday coder. Thursday is my most active day with 173 sessions. Saturday drops to 54, just under a third of that. Sundays are even lower. I thought I was a “nights and weekends” builder. The data says otherwise: I do most of my AI-assisted work during structured time, not in stolen hours.

Sessions are short and focused. Average: 7 prompts, ~7 minutes. I’m not having hour-long conversations. I open a session, state a goal, iterate briefly, and close it. Think “sprints,” not “marathons.”
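As a sanity check, the averages can be re-derived from the totals quoted earlier. This is a minimal sketch that uses only numbers stated in this post; the variable names are mine and it is not how vibe-replay computes its stats.

```python
# Totals quoted in this post.
sessions = 878
prompts = 6_046
tool_calls = 52_900
total_minutes = (4 * 24 + 1) * 60  # "4 days and 1 hour" of summed session time

# Per-session and per-prompt averages.
prompts_per_session = prompts / sessions
minutes_per_session = total_minutes / sessions
tool_calls_per_prompt = tool_calls / prompts

# Saturday (54 sessions) relative to Thursday (173 sessions).
saturday_vs_thursday = 54 / 173

print(f"prompts per session:   {prompts_per_session:.1f}")
print(f"minutes per session:   {minutes_per_session:.1f}")
print(f"tool calls per prompt: {tool_calls_per_prompt:.1f}")
print(f"Saturday vs Thursday:  {saturday_vs_thursday:.0%}")
```

Both per-session averages land a little under 7, which is where the rounded "7 prompts, ~7 minutes" comes from.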

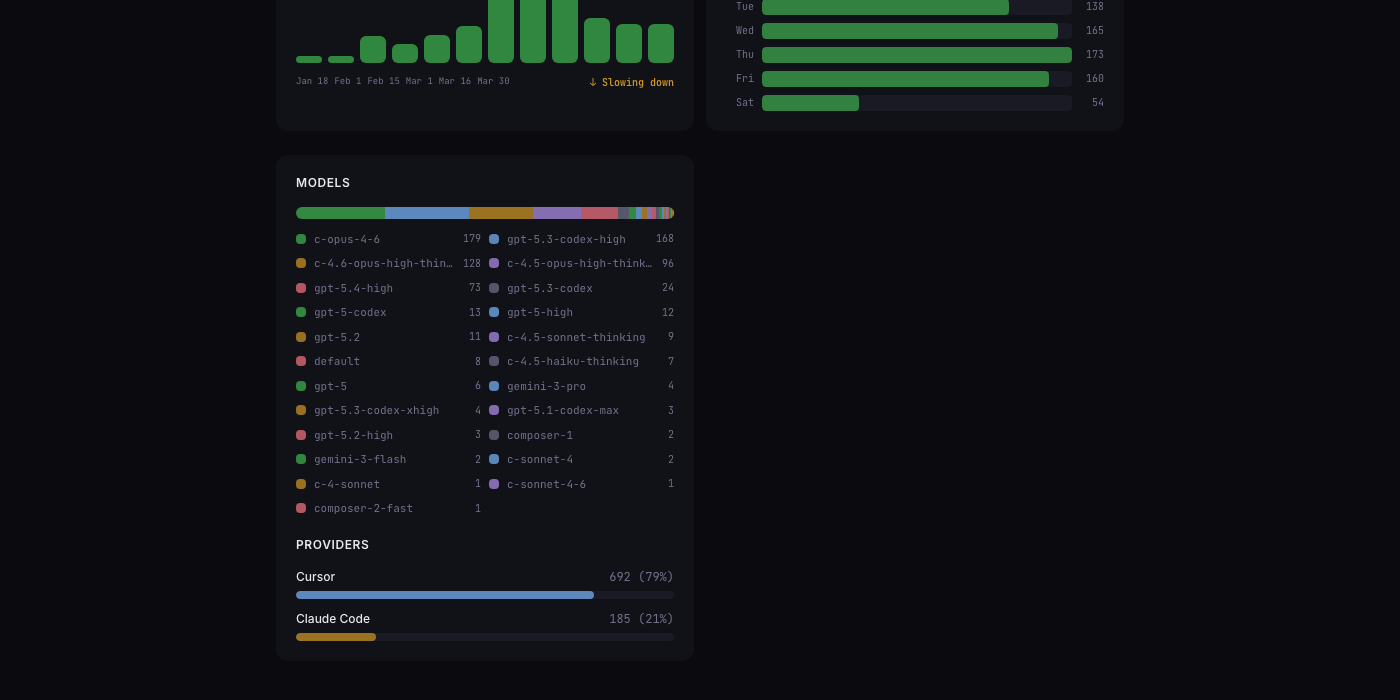

I’m model-promiscuous. 20+ distinct models across Claude, GPT, and Gemini families. claude-opus-4-6 leads with 179 sessions, but gpt-5.3-codex-high is close behind at 168. I use Claude Code for deep, agentic work — the kind where the AI needs to read 15 files and make coordinated changes. Cursor handles quick edits and explorations. The 79/21 Cursor-to-Claude-Code split surprised me. I thought I was a Claude Code purist. The data disagrees.

My peak day was March 6, 2026: 36 sessions. That was the week I was polishing vibe-replay’s sharing feature. I remember that day as productive but not extraordinary. Thirty-six sessions didn’t feel like thirty-six sessions. That’s the thing about short, focused bursts — they don’t accumulate into fatigue the way a single long coding session does.

If you’re curious, here’s my full insights page — contribution heatmap, model breakdowns, streaks, all of it.

Five things I didn’t expect

1. The hard part isn’t prompting — it’s knowing what to build

Everyone talks about prompt engineering. After 6,046 prompts, I can tell you: the prompt is the easy part.

The hard part is the five minutes before the prompt. What’s the actual problem? What does “done” look like? What should I not build?

I’ve caught myself multiple times mid-session, watching an AI cheerfully implement a feature I never should have asked for. The execution speed is so intoxicating that you can build the wrong thing faster than you can realize it’s the wrong thing.

The most valuable prompt I use is some variation of: “Wait. Before we build this, what are we actually solving?”

2. Reading code became more important, not less

I write less code. I read far more.

Every AI response is a code review. Every generated function needs to be understood, not just accepted. When Claude Code makes 447 tool calls in a single session (which it has), I still need to understand what was built. I still need to catch the subtle bug on line 47 that the AI didn’t notice.

My code reading speed has improved dramatically this year. My code writing speed hasn’t — because I barely exercise it anymore.

3. Debugging changed shape

The old debugging loop: read error → form hypothesis → add log statement → reproduce → iterate.

The new debugging loop: paste error → describe what should happen → let AI propose a fix → evaluate the fix → accept or redirect.

It’s faster. But here’s what I didn’t expect: I’ve gotten worse at the manual version. Last month I had to debug something on a machine without AI access (a production server, SSH only). I stared at a stack trace for an embarrassingly long time before I remembered how to think through it step by step.

There’s muscle memory you lose when you outsource a cognitive process. I’m not sure how to feel about that yet.

4. The 30-day hole

Claude Code deletes session JSONL files after 30 days by default. I didn’t know this until I built vibe-replay and discovered months of history had vanished. July through December 2025: gone. My Cursor sessions from that era survived (Cursor keeps SQLite databases indefinitely), but my Claude Code conversations are lost forever.

This is why I wrote about the local storage differences between the two tools. Understanding how your tools manage data isn’t just academic — it determines what evidence of your work survives.

The sparse early months on my heatmap aren’t because I was coding less. They’re because the data was deleted before I thought to preserve it.
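If you want to avoid the same hole, a periodic copy of the JSONL files is enough. Here is a minimal sketch, not what vibe-replay does: `backup_sessions` is a hypothetical helper, and the source directory is something you pass in yourself. I believe Claude Code keeps its session logs under `~/.claude/projects`, but treat that path as an assumption and check your own machine.

```python
import shutil
from pathlib import Path


def backup_sessions(source: Path, dest: Path) -> list[Path]:
    """Copy every *.jsonl file under `source` into `dest`, keeping relative paths.

    A file is skipped when the backup copy has the same size and is at least
    as new, so running this repeatedly only copies new or changed sessions.
    Returns the list of backup paths written on this run.
    """
    copied: list[Path] = []
    for src in sorted(source.rglob("*.jsonl")):
        if not src.is_file():
            continue
        target = dest / src.relative_to(source)
        if target.exists():
            s, t = src.stat(), target.stat()
            # Compare whole seconds to tolerate filesystem timestamp rounding.
            if t.st_size == s.st_size and int(t.st_mtime) >= int(s.st_mtime):
                continue
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, target)  # copy2 also preserves the modification time
        copied.append(target)
    return copied
```

Calling something like `backup_sessions(Path.home() / ".claude" / "projects", Path.home() / "session-backup")` from a daily scheduled job, with the source path adjusted to your setup, would keep a copy well inside the 30-day window. The backup directory name is just an example.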

5. Collaboration with AI is a skill that compounds

My first vibe coding session produced a functioning but generic website. My recent sessions produce exactly what I want in a fraction of the time. Not because the AI got better (though it did), but because I got better at communicating with it.

I’ve learned to:

- Front-load context instead of dripping it in

- Describe what I want in terms of existing patterns in the codebase

- Batch related changes into single prompts (my record: 10 UX improvements in one go)

- Recognize when the AI is heading down a wrong path early, before it generates 200 lines of code I’ll throw away

These aren’t skills you learn from a tutorial. They develop through repetition. 878 sessions of repetition.

What I’m still figuring out

A year in, I have more questions than answers.

How do you maintain deep understanding? When AI writes most of your code, how do you stay sharp enough to review it critically? I try to read every diff carefully. Some days I’m disciplined. Some days I approve a 300-line change with a glance.

Where’s the ceiling? My sessions are getting shorter and more focused. Am I approaching an asymptote, or is there another order-of-magnitude improvement ahead? The jump from GPT-4 to Claude Opus was significant. What happens with the next generation?

What happens to junior developers? I learned to code by writing bad code and debugging it. If you skip that struggle, do you develop the same intuitions? I genuinely don’t know, and I think the industry doesn’t either.

Am I building a dependency? That debugging session on the production server rattled me. If these tools disappeared tomorrow, could I go back to the old way? Probably. But it would feel like switching from a power tool back to a hand saw.

The year in three sentences

I typed prompts instead of code for 399 days. My output went up, my keystrokes went down, and my understanding of what I actually do as an engineer shifted permanently. The bottleneck was never typing speed — it was always clarity of thought.

878 sessions tracked with vibe-replay. All numbers verified from local session data. If you want to see your own patterns: npx vibe-replay.